Sources:

https://hpbn.co/transport-layer-security-tls/

What Is TLS/SSL?

One problem when you administer a network is securing data that is being sent between applications across an untrusted network. You can use TLS/SSL to authenticate servers and clients and then use it to encrypt messages between the authenticated parties.

The Transport Layer Security (TLS) protocol, Secure Sockets Layer (SSL) protocol, versions 2.0 and 3.0, and the Private Communications Transport (PCT) protocol are based on public key cryptography. The Security Channel (Schannel) authentication protocol suite provides these protocols. All Schannel protocols use a client/server model.

In the authentication process, a TLS/SSL client sends a message to a TLS/SSL server, and the server responds with the information that the server needs to authenticate itself. The client and server perform an additional exchange of session keys, and the authentication dialog ends. When authentication is completed, SSL-secured communication can begin between the server and the client using the symmetric encryption keys that are established during the authentication process.

For servers to authenticate to clients, TLS/SSL does not require server keys to be stored on domain controllers or in a database, such as the Microsoft Active Directory directory service. Clients confirm the validity of a server’s credentials with a trusted root certification authority’s (CA’s) certificates, which are loaded when you install Microsoft Windows Server 2003. Therefore, unless user authentication is required by the server, users do not need to establish accounts before they create a secure connection with a server.

The SSL protocol was originally developed at Netscape to enable ecommerce transaction security on the Web, which required encryption to protect customers’ personal data, as well as authentication and integrity guarantees to ensure a safe transaction. To achieve this, the SSL protocol was implemented at the application layer, directly on top of TCP (Figure 4-1), enabling protocols above it (HTTP, email, instant messaging, and many others) to operate unchanged while providing communication security when communicating across the network.

When SSL is used correctly, a third-party observer can only infer the connection endpoints, type of encryption, as well as the frequency and an approximate amount of data sent, but cannot read or modify any of the actual data.

TLS was designed to operate on top of a reliable transport protocol such as TCP. However, it has also been adapted to run over datagram protocols such as UDP. The Datagram Transport Layer Security (DTLS) protocol, defined in RFC 6347, is based on the TLS protocol and is able to provide similar security guarantees while preserving the datagram delivery model.

Differences between TLS and SSL

Although there are some slight differences between SSL 3.0 and TLS 1.0, this reference refers to the protocol as TLS/SSL.

Note

- Although their differences are minor, TLS 1.0 and SSL 3.0 are not interchangeable. If the same protocol is not supported by both parties, the parties must negotiate a common protocol to communicate successfully.

TLS Enhancements to SSL

- The keyed-Hashing for Message Authentication Code (HMAC) algorithm replaces the SSL Message Authentication Code (MAC) algorithm.

HMAC produces more secure hashes than the MAC algorithm. The HMAC produces an integrity check value as the MAC does, but with a hash function construction that makes the hash much harder to break. For more information about the HMAC, see “Hash Algorithms in The Handshake Layer in TLS/SSL Architecture” in How TLS/SSL Works.

- TLS is standardized in RFC 2246.

- Many new alert messages are added.

- In TLS, it is not always necessary to include certificates all the way back to the root CA. You can use an intermediary authority.

- TLS specifies padding block values that are used with block cipher algorithms. RC4, which is used by Microsoft, is a stream cipher, so this modification is not relevant.

- Fortezza algorithms are not included in the TLS RFC, because they are not open for public review. (This is Internet Engineering Task Force (IETF) policy.)

- Minor differences exist in some message fields.

Benefits of TLS/SSL

TLS/SSL provides numerous benefits to clients and servers over other methods of authentication, including:

- Strong authentication, message privacy, and integrity

- Interoperability

- Algorithm flexibility

- Ease of deployment

- Ease of use

Strong authentication, message privacy, and integrity

TLS/SSL can help to secure transmitted data using encryption. TLS/SSL also authenticates servers and, optionally, authenticates clients to prove the identities of parties engaged in secure communication. It also provides data integrity through an integrity check value. In addition to protecting against data disclosure, the TLS/SSL security protocol can be used to help protect against masquerade attacks, man-in-the-middle or bucket brigade attacks, rollback attacks, and replay attacks.

Interoperability

TLS/SSL works with most Web browsers, including Microsoft Internet Explorer and Netscape Navigator, and on most operating systems and Web servers, including the Microsoft Windows operating system, UNIX, Novell, Apache (version 1.3 and later), Netscape Enterprise Server, and Sun Solaris. It is often integrated in news readers, LDAP servers, and a variety of other applications.

Algorithm flexibility

TLS/SSL provides options for the authentication mechanisms, encryption algorithms, and hashing algorithms that are used during the secure session.

Note

- Data can be encrypted and decrypted, but you cannot reverse engineer a hash. Hashing is a one-way process. Running the process backward will not create the original data. This is why a new hash is computed and then compared to the sent hash.
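The recompute-and-compare check described in the note can be sketched in Python with the standard `hashlib` module. SHA-256 is an assumption chosen for illustration (the note does not name a hash function), and the function names are hypothetical.

```python
import hashlib
import hmac


def digest(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string (a one-way operation)."""
    return hashlib.sha256(data).hexdigest()


def matches(received: bytes, sent_digest: str) -> bool:
    """Recompute the digest of the received data and compare it to the sent digest."""
    return hmac.compare_digest(digest(received), sent_digest)


message = b"transfer 100 to alice"
sent = digest(message)

print(matches(message, sent))                   # data arrived unchanged
print(matches(b"transfer 900 to alice", sent))  # data was altered in transit
```

The receiver never tries to reverse the hash; it only repeats the same one-way computation and checks that both results agree.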

Ease of deployment

Many applications use TLS/SSL transparently on a Windows Server 2003 operating system. You can use TLS for more secure browsing when you are using Internet Explorer and Internet Information Services (IIS) and, if the server already has a server certificate installed, you only have to select the check box.

Ease of use

Because you implement TLS/SSL beneath the application layer, most of its operations are completely invisible to the client. This allows the client to have little or no knowledge of the security of communications and still be protected from attackers.

Limitations of TLS/SSL

There are a few limitations to using TLS/SSL, including:

Increased processor load

This is the most significant limitation to implementing TLS/SSL. Cryptography, specifically public key operations, is CPU-intensive. As a result, performance varies when you are using SSL. Unfortunately, there is no way to know how much performance you will lose. The performance varies, depending on how often connections are established and how long they last. TLS uses the greatest resources while it is setting up connections.

Administrative overhead

A TLS/SSL environment is complex and requires maintenance; the system administrator must configure the system and manage certificates.

Encryption, Authentication, and Integrity

The TLS protocol is designed to provide three essential services to all applications running above it: encryption, authentication, and data integrity. Technically, you are not required to use all three in every situation. You may decide to accept a certificate without validating its authenticity, but you should be well aware of the security risks and implications of doing so. In practice, a secure web application will leverage all three services.

- Encryption

- A mechanism to obfuscate what is sent from one host to another.

- Authentication

- A mechanism to verify the validity of provided identification material.

- Integrity

- A mechanism to detect message tampering and forgery.

- In order to establish a cryptographically secure data channel, the connection peers must agree on which ciphersuites will be used and the keys used to encrypt the data. The TLS protocol specifies a well-defined handshake sequence to perform this exchange, which we will examine in detail in TLS Handshake. The ingenious part of this handshake, and the reason TLS works in practice, is due to its use of public key cryptography (also known as asymmetric key cryptography), which allows the peers to negotiate a shared secret key without having to establish any prior knowledge of each other, and to do so over an unencrypted channel.

- As part of the TLS handshake, the protocol also allows both peers to authenticate their identity. When used in the browser, this authentication mechanism allows the client to verify that the server is who it claims to be (e.g., your bank) and not someone simply pretending to be the destination by spoofing its name or IP address (server authentication). This verification is based on the established chain of trust; see Chain of Trust and Certificate Authorities. In addition, the server can also optionally verify the identity of the client (client authentication): e.g., a company proxy server can authenticate all employees, each of whom could have their own unique certificate signed by the company.

- Finally, with encryption and authentication in place, the TLS protocol also provides its own message framing mechanism and signs each message with a message authentication code (MAC). The MAC algorithm is a one-way cryptographic hash function (effectively a checksum), the keys to which are negotiated by both connection peers. Whenever a TLS record is sent, a MAC value is generated and appended for that message, and the receiver is then able to compute and verify the sent MAC value to ensure message integrity and authenticity.
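The generate-and-verify cycle for a record MAC can be sketched with Python's standard `hmac` module. This is a simplified illustration, not the TLS record format: a real TLS record MAC also covers a sequence number and header fields, and HMAC-SHA256, the random key, and the function names are assumptions made for the example.

```python
import hashlib
import hmac
import secrets


def make_mac(key: bytes, record: bytes) -> bytes:
    """Compute the MAC value that the sender appends to a record."""
    return hmac.new(key, record, hashlib.sha256).digest()


def verify_mac(key: bytes, record: bytes, tag: bytes) -> bool:
    """Recompute the MAC on the receiving side and compare in constant time."""
    return hmac.compare_digest(make_mac(key, record), tag)


# Stands in for the MAC key that both peers derive during the handshake.
mac_key = secrets.token_bytes(32)

record = b"GET / HTTP/1.1"
tag = make_mac(mac_key, record)

print(verify_mac(mac_key, record, tag))             # untouched record
print(verify_mac(mac_key, b"GET /admin HTTP/1.1", tag))  # tampered record
```

Because the key is known only to the two peers, an attacker who alters the record cannot produce a matching MAC value.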

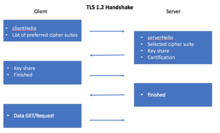

- TLS Handshake

- Before the client and the server can begin exchanging application data over TLS, the encrypted tunnel must be negotiated: the client and the server must agree on the version of the TLS protocol, choose the ciphersuite, and verify certificates if necessary. Unfortunately, each of these steps requires new packet roundtrips (Figure 4-2) between the client and the server, which adds startup latency to all TLS connections.

- 0 ms: TLS runs over a reliable transport (TCP), which means that we must first complete the TCP three-way handshake, which takes one full roundtrip.

- 56 ms: With the TCP connection in place, the client sends a number of specifications in plain text, such as the version of the TLS protocol it is running, the list of supported ciphersuites, and other TLS options it may want to use.

- 84 ms: The server picks the TLS protocol version for further communication, decides on a ciphersuite from the list provided by the client, attaches its certificate, and sends the response back to the client. Optionally, the server can also send a request for the client’s certificate and parameters for other TLS extensions.

- 112 ms: Assuming both sides are able to negotiate a common version and cipher, and the client is happy with the certificate provided by the server, the client initiates either the RSA or the Diffie-Hellman key exchange, which is used to establish the symmetric key for the ensuing session.

- 140 ms: The server processes the key exchange parameters sent by the client, checks message integrity by verifying the MAC, and returns an encrypted Finished message back to the client.

- 168 ms: The client decrypts the message with the negotiated symmetric key, verifies the MAC, and if all is well, then the tunnel is established and application data can now be sent.

- As the above exchange illustrates, new TLS connections require two roundtrips for a "full handshake"—that’s the bad news. However, in practice, optimized deployments can do much better and deliver a consistent 1-RTT TLS handshake:

- False Start is a TLS protocol extension that allows the client and server to start transmitting encrypted application data when the handshake is only partially complete, i.e., once ChangeCipherSpec and Finished messages are sent, but without waiting for the other side to do the same. This optimization reduces handshake overhead for new TLS connections to one roundtrip; see Enable TLS False Start.

- If the client has previously communicated with the server, an "abbreviated handshake" can be used, which requires one roundtrip and also allows the client and server to reduce the CPU overhead by reusing the previously negotiated parameters for the secure session; see TLS Session Resumption.

The combination of both of the above optimizations allows us to deliver a consistent 1-RTT TLS handshake for new and returning visitors, plus computational savings for sessions that can be resumed based on previously negotiated session parameters. Make sure to take advantage of these optimizations in your deployments.

- One of the design goals for TLS 1.3 is to reduce the latency overhead for setting up the secure connection: 1-RTT for new, and 0-RTT for resumed sessions!
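The latency figures above follow from simple arithmetic: one roundtrip for the TCP handshake plus however many roundtrips the TLS handshake needs. The sketch below works this out for the 56 ms roundtrip time implied by the handshake timeline; the function name is hypothetical, and the model ignores processing time on either side.

```python
def setup_latency_ms(rtt_ms: float, tls_roundtrips: int) -> float:
    """Time until application data can be sent: TCP handshake plus TLS roundtrips."""
    tcp_roundtrips = 1  # the TCP three-way handshake costs one full roundtrip
    return (tcp_roundtrips + tls_roundtrips) * rtt_ms


RTT_MS = 56  # roundtrip time implied by the timeline (28 ms each way)

full_handshake = setup_latency_ms(RTT_MS, 2)  # full TLS handshake
one_rtt = setup_latency_ms(RTT_MS, 1)         # False Start or abbreviated handshake
zero_rtt = setup_latency_ms(RTT_MS, 0)        # TLS 1.3 design goal for resumed sessions

print(full_handshake, one_rtt, zero_rtt)  # 168 112 56
```

The 168 ms result matches the last step of the timeline, and each roundtrip removed from the handshake saves one full network roundtrip.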

- A cipher suite is a set of algorithms that help secure a network connection that uses Transport Layer Security (TLS) or Secure Sockets Layer (SSL). A cipher suite usually contains a key exchange algorithm, a bulk encryption algorithm, and a Message Authentication Code (MAC) algorithm. The client and server use cipher suites to agree on which security algorithms to use when sending and receiving information over TLS or SSL.

- Each cipher suite has a unique name that identifies it and describes its algorithmic contents. Each segment in a cipher suite name stands for a different algorithm or protocol. An example of a cipher suite name is TLS_RSA_WITH_3DES_EDE_CBC_SHA. The meaning of this name is:

- TLS defines the protocol that this cipher suite is for; it will usually be TLS.

- RSA indicates the key exchange algorithm being used. The key exchange algorithm is used to determine if and how the client and server will authenticate during the handshake.[8]

- 3DES_EDE_CBC indicates the block cipher being used to encrypt the message stream.[9]

- SHA indicates the message authentication algorithm which is used to authenticate a message.[10]
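The naming scheme broken down above can be illustrated with a small parser. This is a sketch that assumes names of the form PROTOCOL_KEYEXCHANGE_WITH_CIPHER_MAC, as used for TLS 1.0 to 1.2 suites; it is not a general-purpose parser, and the type and function names are hypothetical.

```python
from typing import NamedTuple


class CipherSuite(NamedTuple):
    protocol: str
    key_exchange: str
    cipher: str
    mac: str


def parse_cipher_suite(name: str) -> CipherSuite:
    """Split a cipher suite name into its four segments."""
    protocol, rest = name.split("_", 1)            # "TLS", remainder
    key_exchange, tail = rest.split("_WITH_", 1)   # key exchange, cipher + MAC
    cipher, mac = tail.rsplit("_", 1)              # the MAC is the last segment
    return CipherSuite(protocol, key_exchange, cipher, mac)


suite = parse_cipher_suite("TLS_RSA_WITH_3DES_EDE_CBC_SHA")
print(suite)
```

For the example name, the result is protocol `TLS`, key exchange `RSA`, cipher `3DES_EDE_CBC`, and MAC `SHA`, matching the list above.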

TLS 1.0 - 1.2 Handshake

This protocol first starts by sending a ClientHello message to the server that includes the version of TLS being used and a list of cipher suites in the order of the client’s preference. In response, the server sends a ServerHello message that includes the chosen cipher suite and the session ID. Next the server sends a digital certificate to verify its identity to the client. The server may also request a client’s digital certificate if needed.

If the client and server are not using pre-shared keys, the client then sends an encrypted message to the server that enables the client and the server to compute which secret key will be used during exchanges.

After successfully verifying the authentication of the server and, if needed, exchanging the secret key, the client sends a Finished message to signal that it is done with the handshake process. After receiving this message, the server sends a Finished message that confirms that the handshake is complete. Now the client and the server have agreed on which cipher suite to use when communicating with each other.

Current Draft Proposal for TLS 1.3 Handshake

- If two machines are corresponding over TLS 1.3, they coordinate which cipher suite to use by using the TLS 1.3 Handshake Protocol. The handshake in TLS 1.3 was condensed to only one round trip compared to the two round trips required in previous versions of TLS/SSL.

First the client sends a ClientHello message to the server that contains a list of supported ciphers in order of the client's preference and makes a guess on what key algorithm is being used so that it can send a secret key to share if needed. Making this guess eliminates a round trip. After receiving the ClientHello, the server sends a ServerHello with its key, a certificate, the chosen cipher suite and the Finished message. After the client receives the server's Finished message, it is coordinated with the server on which cipher suite to use.[2]
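Both handshake variants described above start the same way: the client lists cipher suites in preference order and the server picks one. A minimal sketch of that selection step follows. Honoring the client's order is an assumption made for the example (a server can be configured to apply its own preference instead), and the function name is hypothetical.

```python
from typing import Optional, Sequence


def choose_cipher_suite(
    client_preferences: Sequence[str], server_supported: Sequence[str]
) -> Optional[str]:
    """Return the first suite in the client's order that the server supports."""
    supported = set(server_supported)
    for suite in client_preferences:
        if suite in supported:
            return suite
    return None  # no common suite: the handshake cannot proceed


client = [
    "TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256",
    "TLS_RSA_WITH_AES_128_CBC_SHA",
    "TLS_RSA_WITH_3DES_EDE_CBC_SHA",
]
server = ["TLS_RSA_WITH_3DES_EDE_CBC_SHA", "TLS_RSA_WITH_AES_128_CBC_SHA"]

print(choose_cipher_suite(client, server))
```

Here the server does not support the client's first choice, so the second entry in the client's list is selected.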

- Vulnerabilities

- A cipher suite is only as secure as the algorithms that it contains. If the encryption or authentication algorithm in a cipher suite has known vulnerabilities, the cipher suite and the TLS connection are vulnerable as well. A common attack against TLS and cipher suites that exploits this is the downgrade attack. A downgrade in TLS occurs when a modern client connects to legacy servers that are using older versions of TLS or SSL.

When initiating a handshake, the modern client will offer the highest protocol that it supports. If the connection fails, it will automatically retry with a lower protocol such as TLS 1.0 or SSL 3.0 until the handshake is successful with the server. The purpose of downgrading is so that new versions of TLS are compatible with older versions. However, an adversary can take advantage of this feature and force a client to downgrade automatically to a version of TLS or SSL that supports cipher suites with algorithms that are known for weak security and vulnerabilities.[18] This has resulted in attacks such as POODLE.

One way to avoid this security flaw is to disable the ability of a server or client to downgrade to SSL 3.0. The shortcoming of this fix is that some legacy hardware can no longer be accessed by newer hardware. If SSL 3.0 support is needed for legacy hardware, there is an approved TLS_FALLBACK_SCSV cipher suite which verifies that downgrades are not triggered for malicious intentions.
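Refusing old protocol versions on the client side can be sketched with Python's standard `ssl` module, which exposes a `minimum_version` setting on `SSLContext`. The choice of TLS 1.2 as the floor is an assumption for illustration (the text above only calls for disabling SSL 3.0), and the function name is hypothetical.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a client context that refuses to negotiate below TLS 1.2."""
    context = ssl.create_default_context()
    # Handshakes with servers that only offer older versions will fail
    # instead of silently falling back to a weaker protocol.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_client_context()
print(context.minimum_version)
```

The trade-off is the one noted above: a client configured this way can no longer reach legacy servers that only speak the older versions.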

RSA, Diffie-Hellman and Forward Secrecy

Due to a variety of historical and commercial reasons the RSA handshake has been the dominant key exchange mechanism in most TLS deployments: the client generates a symmetric key, encrypts it with the server’s public key, and sends it to the server to use as the symmetric key for the established session. In turn, the server uses its private key to decrypt the sent symmetric key and the key exchange is complete. From this point forward the client and server use the negotiated symmetric key to encrypt their session.

The RSA handshake works, but has a critical weakness: the same public-private key pair is used both to authenticate the server and to encrypt the symmetric session key sent to the server. As a result, if an attacker gains access to the server’s private key and listens in on the exchange, then they can decrypt the entire session. Worse, even if an attacker does not currently have access to the private key, they can still record the encrypted session and decrypt it at a later time once they obtain the private key.

By contrast, the Diffie-Hellman key exchange allows the client and server to negotiate a shared secret without explicitly communicating it in the handshake: the server’s private key is used to sign and verify the handshake, but the established symmetric key never leaves the client or server and cannot be intercepted by a passive attacker even if they have access to the private key.

- Best of all, Diffie-Hellman key exchange can be used to reduce the risk of compromise of past communication sessions: we can generate a new "ephemeral" symmetric key as part of each and every key exchange and discard the previous keys. As a result, because the ephemeral keys are never communicated and are actively renegotiated for each new session, the worst-case scenario is that an attacker could compromise the client or server and access the session keys of the current and future sessions. However, knowing the private key, or the current ephemeral key, does not help the attacker decrypt any of the previous sessions!

- Combined, the use of Diffie-Hellman key exchange and ephemeral sessions keys enables "perfect forward secrecy" (PFS): the compromise of long-term keys (e.g. server’s private key) does not compromise past session keys and does not allow the attacker to decrypt previously recorded sessions. A highly desirable property, to say the least!
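The core of the Diffie-Hellman exchange fits in a few lines of modular arithmetic. The sketch below uses deliberately tiny textbook parameters (modulus 23, generator 5) so the numbers can be checked by hand; real deployments use far larger groups or elliptic curves. The function names are hypothetical.

```python
import secrets

P = 23  # toy prime modulus, public
G = 5   # generator, public


def new_ephemeral_private() -> int:
    """Pick a fresh private value in [2, P - 2] for one session only."""
    return secrets.randbelow(P - 3) + 2


def public_value(private: int) -> int:
    """The value that is sent over the wire."""
    return pow(G, private, P)


def shared_secret(their_public: int, my_private: int) -> int:
    """Each side combines the peer's public value with its own private value."""
    return pow(their_public, my_private, P)


# One session: both sides generate ephemeral private values and exchange
# only the public values. The shared secret itself is never transmitted.
client_private = new_ephemeral_private()
server_private = new_ephemeral_private()

client_secret = shared_secret(public_value(server_private), client_private)
server_secret = shared_secret(public_value(client_private), server_private)
print(client_secret == server_secret)
```

Discarding `client_private` and `server_private` after the session is what makes the keys ephemeral: nothing stored long-term can recompute the secret later.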

Performance of Public vs. Symmetric Key Cryptography

Public-key cryptography is used only during initial setup of the TLS tunnel: the certificates are authenticated and the key exchange algorithm is executed.

Symmetric key cryptography, which uses the established symmetric key, is then used for all further communication between the client and the server within the session. This is done, in large part, to improve performance: public key cryptography is much more computationally expensive. To illustrate the difference, if you have OpenSSL installed on your computer, you can run the following tests:

$> openssl speed ecdh
$> openssl speed aes

Note that the units between the two tests are not directly comparable: the Elliptic Curve Diffie-Hellman (ECDH) test provides a summary table of operations per second for different key sizes, while AES performance is measured in bytes per second. Nonetheless, it should be easy to see that the ECDH operations are much more computationally expensive.

The exact performance numbers vary significantly based on used hardware, number of cores, TLS version, server configuration, and other factors. Don’t fall for an outdated benchmark! Always run the performance tests on your own hardware and refer to Reduce Computational Costs for additional context.

Server Name Indication (SNI)

- An encrypted TLS tunnel can be established between any two TCP peers: the client only needs to know the IP address of the other peer to make the connection and perform the TLS handshake. However, what if the server wants to host multiple independent sites, each with its own TLS certificate, on the same IP address: how does that work? Trick question; it doesn’t.

To address the preceding problem, the Server Name Indication (SNI) extension was introduced to the TLS protocol, which allows the client to indicate the hostname the client is attempting to connect to as part of the TLS handshake. In turn, the server is able to inspect the SNI hostname sent in the ClientHello message, select the appropriate certificate, and complete the TLS handshake for the desired host.
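The client side of SNI can be observed without any network access by using Python's in-memory TLS transport (`ssl.MemoryBIO` and `SSLContext.wrap_bio`). The sketch below starts a handshake for a hostname and captures the ClientHello bytes the client would send; the hostname `example.com` and the function name are assumptions for illustration.

```python
import ssl


def client_hello_bytes(hostname: str) -> bytes:
    """Start a TLS handshake in memory and return the client's first flight."""
    context = ssl.create_default_context()
    incoming, outgoing = ssl.MemoryBIO(), ssl.MemoryBIO()
    tls = context.wrap_bio(incoming, outgoing, server_hostname=hostname)
    try:
        tls.do_handshake()
    except ssl.SSLWantReadError:
        # Expected: the ClientHello has been written and the client is now
        # waiting for a server reply that never arrives in this sketch.
        pass
    return outgoing.read()


hello = client_hello_bytes("example.com")
print(b"example.com" in hello)
```

The hostname appears in the captured bytes because the SNI extension travels inside the ClientHello, which is what lets the server pick the matching certificate before any keys are established.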

Session Identifiers

The first Session Identifiers (RFC 5246) resumption mechanism was introduced in SSL 2.0, which allowed the server to create and send a 32-byte session identifier as part of its ServerHello message during the full TLS negotiation we saw earlier. With the session ID in place, both the client and server can store the previously negotiated session parameters (keyed by session ID) and reuse them for a subsequent session.

Specifically, the client can include the session ID in the ClientHello message to indicate to the server that it still remembers the negotiated cipher suite and keys from the previous handshake and is able to reuse them. In turn, if the server is able to find the session parameters associated with the advertised ID in its cache, then an abbreviated handshake (Figure 4-3) can take place. Otherwise, a full new session negotiation is required, which will generate a new session ID.

Figure 4-3. Abbreviated TLS handshake protocol

Leveraging session identifiers allows us to remove a full roundtrip, as well as the overhead of public key cryptography, which is used to negotiate the shared secret key. This allows a secure connection to be established quickly and with no loss of security, since we are reusing the previously negotiated session data.

- Session resumption is an important optimization both for HTTP/1.x and HTTP/2 deployments. The abbreviated handshake eliminates a full roundtrip of latency and significantly reduces computational costs for both sides.

In fact, if the browser requires multiple connections to the same host (e.g. when HTTP/1.x is in use), it will often intentionally wait for the first TLS negotiation to complete before opening additional connections to the same server, such that they can be "resumed" and reuse the same session parameters. If you’ve ever looked at a network trace and wondered why you rarely see multiple same-host TLS negotiations in flight, that’s why!
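The server-side bookkeeping behind session identifiers can be sketched as a cache keyed by a 32-byte ID. This is a simplified model of the decision only (no expiry, no real key material); the class, method, and parameter names are hypothetical.

```python
import secrets
from typing import Dict, Optional, Tuple


class SessionCache:
    """Maps 32-byte session IDs to previously negotiated parameters."""

    def __init__(self) -> None:
        self._sessions: Dict[bytes, dict] = {}

    def handshake(
        self, offered_id: Optional[bytes], fresh_parameters: dict
    ) -> Tuple[str, bytes]:
        """Return the handshake type and the session ID to use."""
        if offered_id is not None and offered_id in self._sessions:
            # Cache hit: reuse the stored parameters, skip the key exchange.
            return "abbreviated", offered_id
        # Cache miss: negotiate from scratch and issue a new session ID.
        session_id = secrets.token_bytes(32)
        self._sessions[session_id] = fresh_parameters
        return "full", session_id


cache = SessionCache()
params = {"cipher_suite": "TLS_RSA_WITH_AES_128_CBC_SHA"}

first_kind, session_id = cache.handshake(None, params)
second_kind, resumed_id = cache.handshake(session_id, params)
print(first_kind, second_kind)  # full abbreviated
```

A client that offers an ID the server no longer remembers simply falls back to a full handshake and receives a new ID.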

Chain of Trust and Certificate Authorities

Authentication is an integral part of establishing every TLS connection. After all, it is possible to carry out a conversation over an encrypted tunnel with any peer, including an attacker, and unless we can be sure that the host we are speaking to is the one we trust, then all the encryption work could be for nothing. To understand how we can verify the peer’s identity, let’s examine a simple authentication workflow between Alice and Bob:

- Both Alice and Bob generate their own public and private keys.

- Both Alice and Bob hide their respective private keys.

- Alice shares her public key with Bob, and Bob shares his with Alice.

- Alice generates a new message for Bob and signs it with her private key.

- Bob uses Alice’s public key to verify the provided message signature.

Trust is a key component of the preceding exchange. Specifically, public key encryption allows us to use the public key of the sender to verify that the message was signed with the right private key, but the decision to approve the sender is still one that is based on trust. In the exchange just shown, Alice and Bob could have exchanged their public keys when they met in person, and because they know each other well, they are certain that their exchange was not compromised by an impostor. Perhaps they even verified their identities through another, secret (physical) handshake they had established earlier!

Next, Alice receives a message from Charlie, whom she has never met, but who claims to be a friend of Bob’s. In fact, to prove that he is friends with Bob, Charlie asked Bob to sign his own public key with Bob’s private key and attached this signature with his message (Figure 4-4). In this case, Alice first checks Bob’s signature of Charlie’s key. She knows Bob’s public key and is thus able to verify that Bob did indeed sign Charlie’s key. Because she trusts Bob’s decision to verify Charlie, she accepts the message and performs a similar integrity check on Charlie’s message to ensure that it is, indeed, from Charlie.

Figure 4-4. Chain of trust for Alice, Bob, and Charlie
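The Alice, Bob, and Charlie exchange can be traced with a toy signature scheme. The sketch below uses textbook RSA with tiny, well-known example moduli and a hash reduced modulo n, purely so that it runs on the standard library; it is insecure by construction and only illustrates the order of the checks. All names and key values are assumptions for the example.

```python
import hashlib
from typing import NamedTuple


class KeyPair(NamedTuple):
    n: int  # public modulus
    e: int  # public exponent
    d: int  # private exponent, known only to the owner


# Textbook-sized keys (3233 = 61 * 53, 2773 = 47 * 59): far too small for real use.
BOB = KeyPair(n=3233, e=17, d=2753)
CHARLIE = KeyPair(n=2773, e=17, d=157)


def _digest(message: bytes, n: int) -> int:
    """Hash the message and reduce it into the range of the toy modulus."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % n


def sign(message: bytes, signer: KeyPair) -> int:
    """Sign with the private exponent."""
    return pow(_digest(message, signer.n), signer.d, signer.n)


def verify(message: bytes, signature: int, n: int, e: int) -> bool:
    """Verify with the public key only."""
    return pow(signature, e, n) == _digest(message, n)


# Bob vouches for Charlie by signing Charlie's public key.
charlie_public = f"{CHARLIE.n}:{CHARLIE.e}".encode()
bob_signature = sign(charlie_public, BOB)

# Charlie signs his own message to Alice.
message = b"Hi Alice, Bob says hello"
charlie_signature = sign(message, CHARLIE)

# Alice knows only Bob's public key in advance.
trusts_charlie = verify(charlie_public, bob_signature, BOB.n, BOB.e)
message_ok = trusts_charlie and verify(message, charlie_signature, CHARLIE.n, CHARLIE.e)
print(trusts_charlie, message_ok)
```

Alice performs two checks in sequence: first Bob's signature over Charlie's key, then Charlie's signature over the message. If the first check fails, she has no reason to trust the key used in the second.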

What we have just done is established a chain of trust: Alice trusts Bob, Bob trusts Charlie, and by transitive trust, Alice decides to trust Charlie. As long as nobody in the chain is compromised, this allows us to build and grow the list of trusted parties.

Authentication on the Web and in your browser follows the exact same process as shown. Which means that at this point you should be asking: whom does your browser trust, and whom do you trust when you use the browser? There are at least three answers to this question:

- Manually specified certificates

- Every browser and operating system provides a mechanism for you to manually import any certificate you trust. How you obtain the certificate and verify its integrity is completely up to you.

- Certificate authorities

- A certificate authority (CA) is a trusted third party that is trusted by both the subject (owner) of the certificate and the party relying upon the certificate.

- The browser and the operating system

- Every operating system and most browsers ship with a list of well-known certificate authorities. Thus, you also trust the vendors of this software to provide and maintain a list of trusted parties.

- In practice, it would be impractical to store and manually verify each and every key for every website (although you can, if you are so inclined). Hence, the most common solution is to use certificate authorities (CAs) to do this job for us (Figure 4-5): the browser specifies which CAs to trust (root CAs), and the burden is then on the CAs to verify each site they sign, and to audit and verify that these certificates are not misused or compromised. If the security of any site with the CA’s certificate is breached, then it is also the responsibility of that CA to revoke the compromised certificate.

Figure 4-5. CA signing of digital certificates

Certificate Transparency

Every operating system vendor and every browser provide a public listing of all the certificate authorities they trust by default. Use your favorite search engine to find and investigate these lists. In practice, you’ll find that most systems rely on hundreds of trusted certificate authorities, which is also a common complaint against the system: the large number of trusted CAs creates a large attack surface area against the chain of trust in your browser.

The good news is, the Certificate Transparency project is working to address these flaws by providing a framework (a public log) for monitoring and auditing of issuance of all new certificates. Visit the project website to learn more.

Certificate Revocation

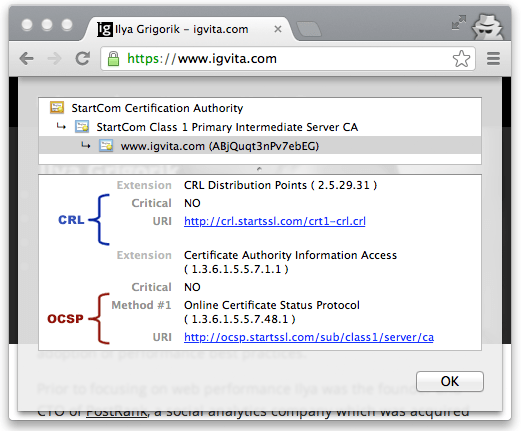

Occasionally the issuer of a certificate will need to revoke or invalidate the certificate due to a number of possible reasons: the private key of the certificate has been compromised, the certificate authority itself has been compromised, or due to a variety of more benign reasons such as a superseding certificate, change in affiliation, and so on. To address this, the certificates themselves contain instructions (Figure 4-7) on how to check if they have been revoked. Hence, to ensure that the chain of trust is not compromised, each peer can check the status of each certificate by following the embedded instructions, along with the signatures, as it verifies the certificate chain.

Figure 4-7. CRL and OCSP instructions for igvita.com (Google Chrome, v25)

Certificate Revocation List (CRL)

Certificate Revocation List (CRL) is defined by RFC 5280 and specifies a simple mechanism to check the status of every certificate: each certificate authority maintains and periodically publishes a list of revoked certificate serial numbers. Anyone attempting to verify a certificate is then able to download the revocation list, cache it, and check the presence of a particular serial number within it. If it is present, then it has been revoked.

This process is simple and straightforward, but it has a number of limitations:

- The growing number of revocations means that the CRL will only get longer, and each client must retrieve the entire list of serial numbers.

- There is no mechanism for instant notification of certificate revocation: if the CRL was cached by the client before the certificate was revoked, then the CRL will deem the revoked certificate valid until the cache expires.

- The need to fetch the latest CRL from the CA may block certificate verification, which can add significant latency to the TLS handshake.

- The CRL fetch may fail due to a variety of reasons, and in such cases the browser behavior is undefined. Most browsers treat such cases as "soft fail", allowing the verification to proceed. Yes, yikes.
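The CRL lookup and two of the limitations listed above (stale caches and soft fail) can be sketched as a small function. The CRL is modeled as a plain set of serial numbers rather than the signed RFC 5280 structure, and the function names, the `OSError` failure mode, and the return strings are assumptions for the example.

```python
from typing import Callable, Optional, Set


def check_revocation(
    serial: int,
    cached_crl: Optional[Set[int]],
    fetch_crl: Callable[[], Set[int]],
) -> str:
    """Check a serial number against the cached CRL, fetching one if needed."""
    crl = cached_crl
    if crl is None:
        try:
            crl = fetch_crl()  # may block the handshake while downloading
        except OSError:
            # Soft fail: the list is unavailable, so verification proceeds.
            return "soft-fail"
    return "revoked" if serial in crl else "valid"


def fetch_ok() -> Set[int]:
    return {1001, 1002}  # hypothetical revoked serial numbers


def fetch_broken() -> Set[int]:
    raise OSError("CRL distribution point unreachable")


print(check_revocation(1001, None, fetch_ok))      # revoked
print(check_revocation(1001, {1002}, fetch_ok))    # valid (stale cache)
print(check_revocation(1001, None, fetch_broken))  # soft-fail
```

The second call shows the stale-cache problem: the certificate was revoked after the client cached its list, so the client still accepts it.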

Online Certificate Status Protocol (OCSP)

To address some of the limitations of the CRL mechanism, the Online Certificate Status Protocol (OCSP) was introduced by RFC 2560, which provides a mechanism to perform a real-time check for status of the certificate. Unlike the CRL file, which contains all the revoked serial numbers, OCSP allows the client to query the CA’s certificate database directly for just the serial number in question while validating the certificate chain.

As a result, the OCSP mechanism consumes less bandwidth and is able to provide real-time validation. However, the requirement to perform real-time OCSP queries creates its own set of problems:

- The CA must be able to handle the load of the real-time queries.

- The CA must ensure that the service is up and globally available at all times.

- Real-time OCSP requests may impair the client’s privacy because the CA knows which sites the client is visiting.

- The client must block on OCSP requests while validating the certificate chain.

- The browser behavior is, once again, undefined and typically results in a "soft fail" if the OCSP fetch fails due to a network timeout or other errors.

OCSP Stapling

For the reasons listed above, neither the CRL nor the OCSP revocation mechanism offers the security and performance guarantees that we desire for our applications. However, don’t despair, because OCSP stapling (RFC 6066, "Certificate Status Request" extension) addresses most of the issues we saw earlier by allowing the validation to be performed by the server and be sent ("stapled") as part of the TLS handshake to the client:

- Instead of the client making the OCSP request, it is the server that periodically retrieves the signed and timestamped OCSP response from the CA.

- The server then appends (i.e. "staples") the signed OCSP response as part of the TLS handshake, allowing the client to validate both the certificate and the attached OCSP revocation record signed by the CA.

This role reversal is secure, because the stapled record is signed by the CA and can be verified by the client, and offers a number of important benefits:

- The client does not leak its navigation history.

- The client does not have to block and query the OCSP server.

- The client may "hard-fail" revocation handling if the server opts in (by advertising the OCSP "Must-Staple" flag) and the verification fails.
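The client's decision with a stapled response can be sketched as follows. This is a simplified model that assumes the CA's signature and timestamp on the stapled record have already been verified; the status strings, the fallback behavior without Must-Staple, and the function name are assumptions for illustration.

```python
from typing import Optional


def accept_certificate(stapled_status: Optional[str], must_staple: bool) -> bool:
    """Decide whether to continue the handshake based on the stapled OCSP record.

    stapled_status is "good", "revoked", or None when nothing usable was stapled.
    """
    if stapled_status == "good":
        return True
    if stapled_status == "revoked":
        return False
    # Nothing usable was stapled. With Must-Staple the client can hard-fail;
    # without it, this sketch soft-fails and lets the connection proceed.
    return not must_staple


print(accept_certificate("good", must_staple=True))     # True
print(accept_certificate("revoked", must_staple=False)) # False
print(accept_certificate(None, must_staple=True))       # False (hard fail)
print(accept_certificate(None, must_staple=False))      # True (soft fail)
```

Must-Staple is what turns the missing-response case from a silent soft fail into a hard failure, since an attacker can otherwise strip the stapled record.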

In short, to get both the best security and performance guarantees, make sure to configure and test OCSP stapling on your servers.

- https://www.ssllabs.com/ssltest/

- https://www.certificate-transparency.org/

- https://en.wikipedia.org/wiki/Diffie%E2%80%93Hellman_key_exchange

- https://tools.ietf.org/html/rfc7258

- https://www.iab.org/2014/11/14/iab-statement-on-internet-confidentiality/

- https://letsencrypt.org/

- https://technet.microsoft.com/en-us/library/cc779826(v=ws.10).aspx

- https://technet.microsoft.com/en-us/library/cc783349(v=ws.10).aspx

- https://en.wikipedia.org/wiki/Elliptic-curve_Diffie%E2%80%93Hellman

- https://en.wikipedia.org/wiki/Elliptic_Curve_Digital_Signature_Algorithm

- https://en.wikipedia.org/wiki/Block_cipher
